Mapper

The map function in Mapper reads row by row of Input File

Combiner

The Combiner wont be called when the call to the Reducer class is not there in Driver class.

Reducer

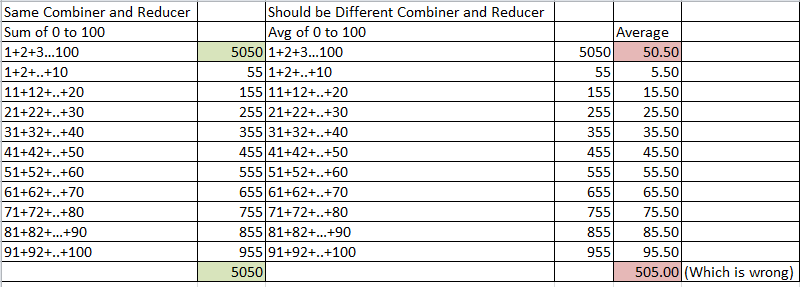

The Reducer and Combiner need not do the same thing as in case of average of 0 to 100 Numbers.

Input to Mapper

1/1/09 1:26,Product2,1200,Nikki,United States

1/1/09 1:51,Product2,1200,Si,Denmark

1/1/09 10:06,Product2,3600,Irene,Germany

1/1/09 11:05,Product2,1200,Janis,Ireland

1/1/09 12:19,Product2,1200,Marlene,United States

1/1/09 12:20,Product2,3600,seemab,Malta

1/1/09 12:25,Product2,3600,Anne-line,Switzerland

1/1/09 12:42,Product1,1200,ashton,United Kingdom

1/1/09 14:19,Product2,1200,Gabriel,Canada

1/1/09 14:22,Product1,1200,Liban,Norway

1/1/09 16:00,Product2,1200,Toni,United Kingdom

1/1/09 16:44,Product2,1200,Julie,United States

1/1/09 18:32,Product1,1200,Andrea,United States

Output of Mapper

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {3600} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {3600} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {3600} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product1}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product1}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product2}

Value = Product Price {1200} Product No {1}

----------------------------

Key = Product Date {2009-01} Product Name {Product1}

Value = Product Price {1200} Product No {1}

----------------------------

Magic of Framework happens Here

Input of Combiner

----------------------------

Key = Product Name {2009-01} Product No {Product1}

Values

Product Price 1200

Product No 1

Product Price 1200

Product No 1

Product Price 1200

Product No 1

----------------------------

Key = Product Name {2009-01} Product No {Product2}

Values

Product Price 1200

Product No 1

Product Price 1200

Product No 1

Product Price 3600

Product No 1

Product Price 1200

Product No 1

Product Price 1200

Product No 1

Product Price 3600

Product No 1

Product Price 1200

Product No 1

Product Price 1200

Product No 1

Product Price 1200

Product No 1

Product Price 3600

Product No 1

----------------------------

Values added together in Combiner based on Key

key 2009-01 Product1

productPrice 1200

productNos 1

----------------------------

productPrice 2400

productNos 2

----------------------------

productPrice 3600

productNos 3

----------------------------

key 2009-01 Product2

productPrice 1200

productNos 1

----------------------------

productPrice 2400

productNos 2

----------------------------

productPrice 6000

productNos 3

----------------------------

productPrice 7200

productNos 4

----------------------------

productPrice 8400

productNos 5

----------------------------

productPrice 12000

productNos 6

----------------------------

productPrice 13200

productNos 7

----------------------------

productPrice 14400

productNos 8

----------------------------

productPrice 15600

productNos 9

----------------------------

productPrice 19200

productNos 10

----------------------------

Output of Combiner and Input to reducer

Key = Product Name {2009-01} Product No {Product1}

value = Product Price {3600} Product Nos {3}

----------------------------

key = Key = Product Name {2009-01} Product No {Product2}

Value = Product Price {19200} Product Nos {10}

Output of Reducer

Key = Product Name {2009-01} Product No {Product1}

Value = AvgVolume {1200} NoOfRecords {3}

----------------------------

Key = Product Name {2009-01} Product No {Product2}

Value = AvgVolume {1920} NoOfRecords {10}

----------------------------